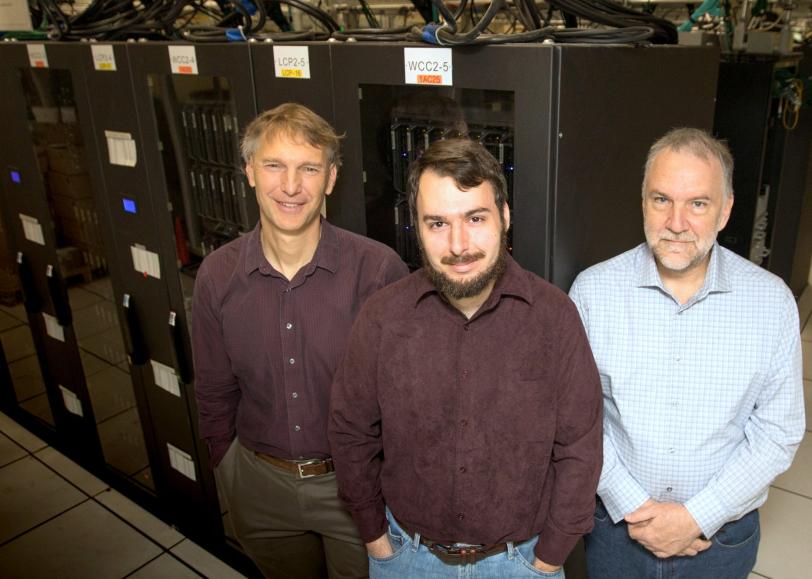

Q&A: Alan Heirich and Elliott Slaughter Take On SLAC’s Big Data Challenges

As members of the lab’s Computer Science Division, they develop the tools needed to handle ginormous data volumes produced by the next generation of scientific discovery machines.

By Manuel Gnida

As the Department of Energy’s SLAC National Accelerator Laboratory builds the next generation of powerful instruments for groundbreaking research in X-ray science, astronomy and other fields, its Computer Science Division is preparing for the onslaught of data these instruments will produce.

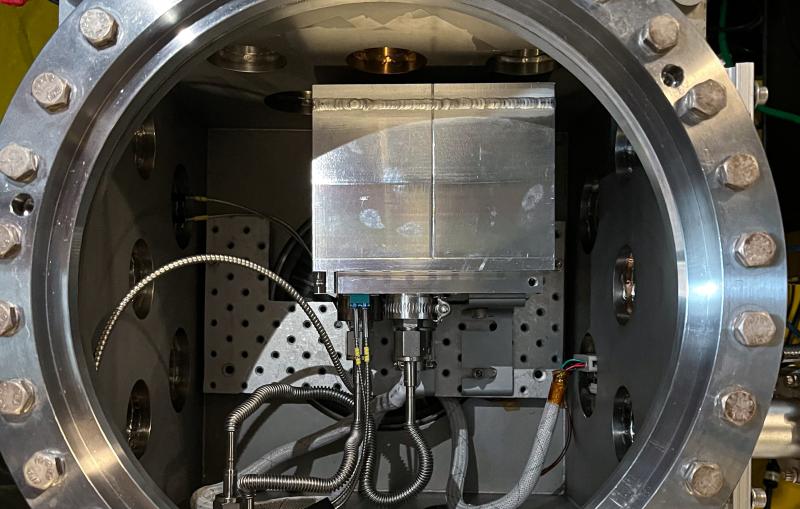

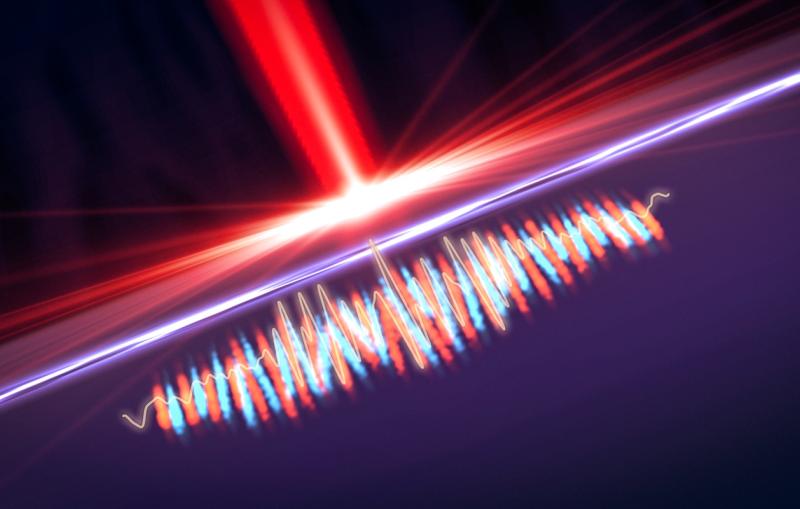

The division’s initial focus is on LCLS-II, an upgrade to the Linac Coherent Light Source (LCLS) X-ray laser that will fire 8,000 times faster than the current version. LCLS-II promises to provide completely new views of the atomic world and its fundamental processes. However, the jump in firing rate goes hand in hand with an explosion of scientific data that would overwhelm today’s computing architectures.

In this Q&A, SLAC computer scientists Alan Heirich and Elliott Slaughter talk about their efforts to develop new computing capabilities that will help the lab cope with the coming data challenges.

Heirich, who joined the lab last April, earned a PhD from the California Institute of Technology and has many years of experience working in industry and academia. Slaughter joined last June; he’s a recent PhD graduate from Stanford University, where he worked under the guidance of Alex Aiken, professor of computer science at Stanford and director of SLAC’s Computer Science Division.

What are the computing challenges you’re trying to solve?

Heirich: The major challenge we’re looking at now is that LCLS-II will produce so much more data than the current X-ray laser. Data rates will increase 10,000 times, from about 100 megabytes per second today to a terabyte per second in a few years. We need to think about the computing tools and infrastructure necessary to take control over that enormous future data stream.

Slaughter: Our development of new computing architectures is aimed at analyzing LCLS-II data on the fly, providing initial results within a minute or two. This allows researchers to evaluate the quality of their data quickly, make adjustments and collect data in the most efficient way. However, real-time data analysis is quite challenging if you collect data with an X-ray laser that fires a million pulses per second.
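To put those figures in perspective, here is a rough back-of-the-envelope sketch using only the numbers quoted above (100 megabytes per second today, a 10,000-fold increase, and a million pulses per second). It assumes decimal units (1 MB = 10^6 bytes) and, purely for illustration, that the data are spread evenly over every pulse; the variable and function names are hypothetical and not part of any SLAC software.

```python
# Back-of-the-envelope check of the data rates quoted in the interview.
# Assumption: decimal units (1 MB = 10**6 bytes, 1 TB = 10**12 bytes).
MB = 10**6
TB = 10**12

current_rate = 100 * MB          # bytes per second today (from the article)
increase_factor = 10_000         # expected increase (from the article)
future_rate = current_rate * increase_factor

pulses_per_second = 1_000_000    # LCLS-II pulse rate (from the article)


def summarize():
    """Return derived figures; the per-pulse value is an illustrative
    average that assumes data are spread evenly over all pulses."""
    return {
        "future_rate_tb_per_s": future_rate / TB,
        "tb_per_minute": future_rate * 60 / TB,
        "avg_mb_per_pulse": future_rate / pulses_per_second / MB,
    }


if __name__ == "__main__":
    for name, value in summarize().items():
        print(f"{name}: {value}")
```

Under these assumptions, a single minute of running at the future rate would correspond to about 60 terabytes, which gives a sense of why results "within a minute or two" is an ambitious target.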

How can real-time analysis be achieved?

Slaughter: We won’t be able to do all this with just the computing capabilities we have on site. The plan is to send some of the most challenging LCLS-II data analyses to the National Energy Research Scientific Computing Center (NERSC) at DOE’s Lawrence Berkeley National Laboratory, where extremely fast supercomputers will analyze the data and send the results back to us within minutes.

Our team has joined forces with Amedeo Perazzo, who leads the LCLS Controls and Data Systems Division, to develop the system that will run the analysis. Scientists doing experiments at LCLS will be able to define the details of that analysis, depending on what their scientific questions are.

Our goal is to be able to do the analysis in a very flexible way using all kinds of high-performance computers that have completely different hardware and architectures. In the future, these will also include exascale supercomputers that perform more than a billion billion calculations per second – up to a hundred times more than today’s most powerful machines.

Is it difficult to build such a flexible computing system?

Heirich: Yes. Supercomputers are very complex with millions of processors running in parallel, and we need to figure out how to make use of their individual architectures most efficiently. At Stanford, we’re therefore developing a programming system, called Legion, that allows people to write programs that are portable across very different high-performance computer architectures.

Traditionally, if you want to run a program with the best possible performance on a new computer system, you may need to rewrite significant parts of the program so that it matches the new architecture. That’s very labor- and cost-intensive. Legion, on the other hand, is specifically designed to be used on diverse architectures and requires only relatively small tweaks when moving from one system to another. This approach prepares us for whatever the future of computing looks like. At SLAC, we’re now starting to adapt Legion to the needs of LCLS-II.

We’re also looking into how we can visualize the scientific data after they are analyzed at NERSC. The analysis will be done on thousands of processors, and it’s challenging to orchestrate this process and put it together into one coherent visual picture. We just presented one way to approach this problem at the supercomputing conference SC17 in November.

What’s the goal for the coming year?

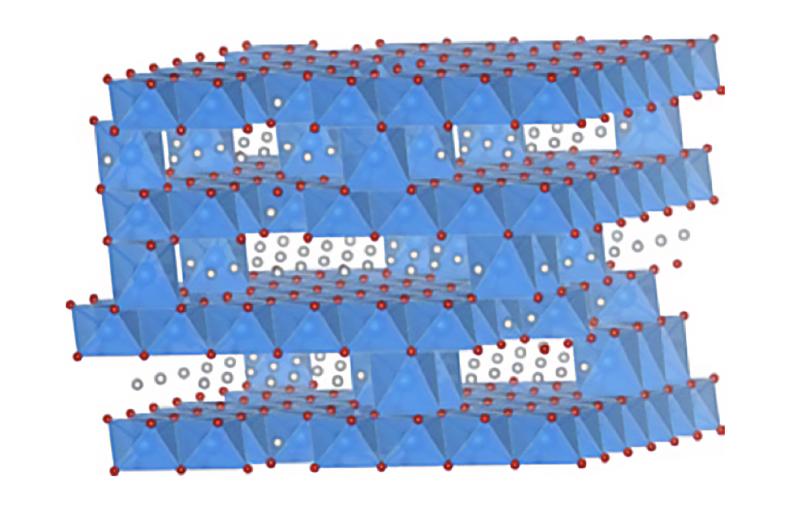

Slaughter: We’re working with the LCLS team on building an initial data analysis prototype. One goal is to get a first test case running on the new system. This will be done with X-ray crystallography data from LCLS, which are used to reconstruct the 3-D atomic structure of important biomolecules, such as proteins. The new system will be much more responsive than the old one. It’ll be able to read and analyze data at the same time, whereas the old system can only do one or the other at any given moment.
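The idea of reading and analyzing data at the same time can be illustrated with a generic producer-consumer pattern. The sketch below is not the LCLS system and does not use Legion; it is a minimal illustration using Python's standard `threading` and `queue` modules, with hypothetical function names and a stand-in "analysis" (a simple average) in place of real crystallography processing.

```python
import queue
import threading

SENTINEL = None  # marks the end of the data stream


def reader(source, q):
    """Hypothetical reader: pushes events into the queue as they arrive."""
    for event in source:
        q.put(event)          # blocks if the buffer is full
    q.put(SENTINEL)


def analyzer(q, results):
    """Hypothetical analyzer: processes events while reading continues."""
    while True:
        event = q.get()
        if event is SENTINEL:
            break
        # Stand-in analysis: average of the values in the event.
        results.append(sum(event) / len(event))


def run_pipeline(source, max_buffered=4):
    """Run reading and analysis concurrently, connected by a bounded queue."""
    q = queue.Queue(maxsize=max_buffered)
    results = []
    t_read = threading.Thread(target=reader, args=(source, q))
    t_analyze = threading.Thread(target=analyzer, args=(q, results))
    t_read.start()
    t_analyze.start()
    t_read.join()
    t_analyze.join()
    return results


if __name__ == "__main__":
    print(run_pipeline([[1, 2, 3], [4, 4], [10]]))
```

The bounded queue lets the reader run ahead of the analyzer by a few events without letting unprocessed data pile up indefinitely. A production system on thousands of processors would need far more than this, but the overlap of the two stages is the same basic idea.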

Will other research areas besides X-ray science profit from your work?

Slaughter: Yes. Alex is working on growing our division, identifying potential projects across the lab and expanding our research portfolio. Although we’re concentrating on LCLS-II right now, we’re interested in joining other projects, such as the Large Synoptic Survey Telescope (LSST). SLAC is building the LSST camera, a 3.2-gigapixel digital camera that will capture unprecedented images of the night sky. But it will also produce enormous piles of data – millions of gigabytes per year. Progress in computer science is needed to efficiently handle these data volumes.

Heirich: SLAC and its close partnership with Stanford Computer Science make for a great research environment. There is also a lot of interest in machine learning. In this form of artificial intelligence, computer programs get better and more efficient over time by learning from the tasks they performed in the past. It’s a very active research field that has seen a lot of growth over the past five years, and machine learning has become remarkably effective in solving complex problems that previously needed to be done by human beings.

Many groups at SLAC and Stanford are exploring how they can exploit machine learning, including teams working in X-ray science, particle physics, astrophysics, accelerator research and more. But there are very fundamental computer science problems to solve. As machine learning replaces some conventional analysis methods, one big question is, for example, whether the solutions it generates are as reliable as those obtained in the conventional way.

LCLS and NERSC are DOE Office of Science user facilities. Legion is being developed at Stanford with funding from DOE’s ExaCT Combustion Co-Design Center, Scientific Data Management, Analysis and Visualization program and Exascale Computing Project (ECP) as well as other contributions. SLAC’s Computer Science Division receives funding from the ECP.

For questions or comments, contact the SLAC Office of Communications at communications@slac.stanford.edu.

SLAC is a multi-program laboratory exploring frontier questions in photon science, astrophysics, particle physics and accelerator research. Located in Menlo Park, Calif., SLAC is operated by Stanford University for the U.S. Department of Energy's Office of Science.

SLAC National Accelerator Laboratory is supported by the Office of Science of the U.S. Department of Energy. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.