How to host an astronomically large data set

Managing the unprecedented amount of data that will soon stream from Rubin Observatory means more than buying tons of hard drives. SLAC scientist Richard Dubois explains what will go into Rubin’s U.S. data facility.

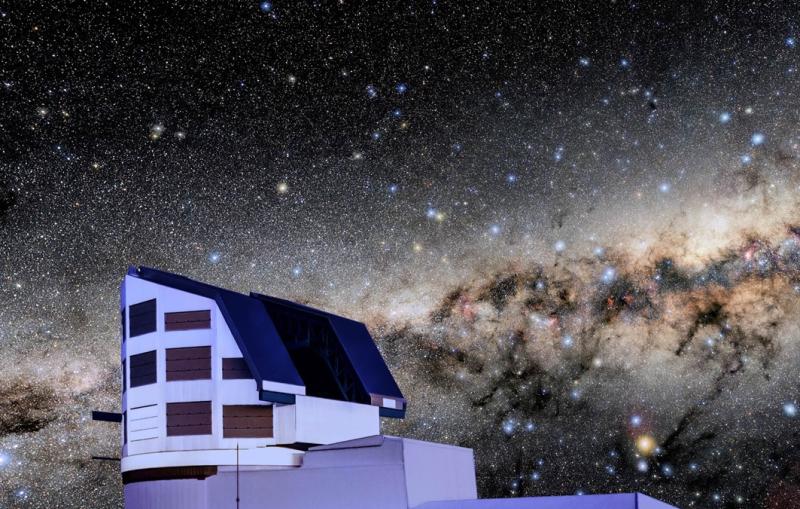

When the Vera C. Rubin Observatory’s Legacy Survey of Space and Time (LSST) kicks off in a few years, it will produce a staggering amount of data about the Southern night sky – 20 terabytes’ worth of images, or roughly the total amount collected by the Sloan Digital Sky Survey, every clear night for a decade. Then, scientists interested in everything from asteroids to dark energy will have to access that data and do something with it.

Rubin scientists have had to think carefully about how to get all that data down from the Chilean mountain where it will first be gathered and on to a data facility at the Department of Energy’s SLAC National Accelerator Laboratory, as well as to two other facilities in the United Kingdom and France.

But what does one do with that much data once it arrives at a data facility – and what is a data facility, anyway? Here, SLAC senior staff scientist Richard Dubois, who is heading up SLAC’s efforts to design and build the facility, explains.

What exactly is a data facility?

A data facility is really an umbrella for three functions. The most immediate function is that every 37 seconds an image will be taken during the night in Chile. One of our missions is that within a minute, we will have done the analysis to say if something in the sky has changed, and then we send out alerts to the community. Then you want a fast turnaround, so if you need to turn Keck Observatory’s telescopes in Hawaii to look at something in more detail, or something like that, they’re told pretty promptly.

But given the depth of imaging, there will be a lot of alerts. We’re projecting 10 million alerts a night, so there’s a whole system being set up to sort the wheat from the chaff. And different communities are interested in different things – there are the asteroid people, there’s planetary research. That’s a quick turnaround, and it has to work like clockwork, but it’s actually not that big a deal – it involves, like, 1,000 computer cores.

That’s not that big a deal?

Not resource-wise, but you have to be efficient because you’ve got six seconds to get the data to SLAC from Chile, and then you’ve got 50 seconds to issue the alerts. It’s going to take good orchestration to make sure that as soon as the image hits the front door, 1,000 machines go into action. But it’s not like we need 10,000 or 50,000 cores.
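
To make the timing concrete, here is a small back-of-the-envelope sketch in Python using only the figures Dubois quotes: six seconds of transfer, 50 seconds of processing, a one-minute target and a 37-second image cadence. The function names are illustrative and are not part of any Rubin software.

```python
# Timing figures quoted in the interview, in seconds.
TRANSFER_S = 6      # Chile to SLAC
PROCESSING_S = 50   # analysis and alert issue at SLAC
DEADLINE_S = 60     # "within a minute"
CADENCE_S = 37      # one image every 37 seconds


def latency_budget(transfer_s, processing_s, deadline_s):
    """Return (total latency, slack left before the deadline)."""
    total = transfer_s + processing_s
    return total, deadline_s - total


def max_images_in_flight(total_latency_s, cadence_s):
    """Upper bound on images being handled at once (ceiling division)."""
    return -(-total_latency_s // cadence_s)


total, slack = latency_budget(TRANSFER_S, PROCESSING_S, DEADLINE_S)
print(f"end-to-end latency: {total} s, slack: {slack} s")
print(f"images in flight at once: up to {max_images_in_flight(total, CADENCE_S)}")
```

Because the end-to-end path is longer than the gap between images, work on one image can still be running when the next one arrives, which is one reason the orchestration has to be tight even though the core count is modest.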

So alerting other facilities that they might need to take a look at something going on, a supernova say, is one goal. What else does the data facility need to do?

The next thing is that every year, we’ll gather up all the data taken to that point and reprocess it. We expect the data to basically arrive linearly – every 37 seconds we take a 2- or 3-gigapixel picture. That’s a pretty predictable data set size.
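
The linear growth can be sketched with a few lines of Python. The 20 terabytes per night and the 10-year survey length come from this article; the number of clear nights per year is not stated anywhere in it, so the value of 300 below is purely an assumption for illustration, as is the use of 1,000 terabytes per petabyte. The sketch covers raw images only, not the processed data products.

```python
TB_PER_NIGHT = 20             # from the article
SURVEY_YEARS = 10             # from the article
CLEAR_NIGHTS_PER_YEAR = 300   # ASSUMPTION: not given in the article
TB_PER_PB = 1000              # decimal petabytes


def cumulative_raw_tb(year, tb_per_night=TB_PER_NIGHT,
                      nights_per_year=CLEAR_NIGHTS_PER_YEAR):
    """Raw image volume gathered by the end of a given survey year, in TB."""
    return year * nights_per_year * tb_per_night


# Each yearly release reprocesses everything taken up to that point,
# so the input to each release grows by the same amount every year.
for year in range(1, SURVEY_YEARS + 1):
    petabytes = cumulative_raw_tb(year) / TB_PER_PB
    print(f"release {year}: about {petabytes:.0f} PB of raw images to reprocess")
```

Changing the assumed number of clear nights scales every line of the output by the same factor, which is the sense in which the data set size is predictable.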

But we’re running a three-ring circus to do the processing. We’ve arranged with the data facilities in France and the U.K. to take a fraction of the data and process it, and then we’ll reassemble it at SLAC to present it. So, France will do half, the U.K. will do a quarter, and the U.S. will do a quarter, and then the U.S. data facility at SLAC will host all of the data from all of the processing.

France will also keep a copy of all the raw data, both for their own science purposes and as a disaster archive – if the Big One hits and SLAC is damaged, there’s a copy of that data somewhere else.

You’ve hinted at the third goal: Since there’s so much data, you need a new way for people to access it.

Right, the third bit is giving scientists access to this data. The data itself will be at SLAC, and we’ve built a science platform that will be their entry point to look at that data. It’s a portable toolkit for accessing the data, and at the moment, we’re comparing a cloud option versus hosting the science platform at SLAC.

How will researchers use the science platform?

Based on how we think people want to work, a major element will be Rubin’s object catalog. An object is a galaxy, a star, a streak, an asteroid and so on. The processing happens, these things are identified and then they go into big databases with metadata – where is the object, when did we see it, how big is it, what energy of light came out of it, those kinds of things. We think the vast majority of researchers requesting access would be making database queries. We’ll have a graphical user interface to help them form the queries, and then there will be other tools for scientists to issue requests.
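
As a rough illustration of what a catalog query might look like, the Python sketch below builds a tiny in-memory table with the standard-library `sqlite3` module and asks for one kind of object inside a patch of sky. The table name, column names and sample rows are all invented for this example; the article does not describe the real Rubin catalog schema or query interface.

```python
import sqlite3

# Toy catalog: HYPOTHETICAL schema and made-up rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE objects (
        object_id INTEGER PRIMARY KEY,
        kind      TEXT,   -- galaxy, star, asteroid, ...
        ra_deg    REAL,   -- position on the sky
        dec_deg   REAL,
        size      REAL    -- apparent size, arbitrary units
    )
    """
)
conn.executemany(
    "INSERT INTO objects VALUES (?, ?, ?, ?, ?)",
    [
        (1, "galaxy", 150.10, -30.20, 12.5),
        (2, "star", 150.30, -30.25, 0.8),
        (3, "asteroid", 151.90, -29.90, 0.5),
        (4, "galaxy", 210.00, -45.00, 20.0),
    ],
)


def objects_in_box(conn, kind, ra_min, ra_max, dec_min, dec_max):
    """Return (id, ra, dec) for objects of one kind inside a rectangular patch."""
    cursor = conn.execute(
        "SELECT object_id, ra_deg, dec_deg FROM objects "
        "WHERE kind = ? AND ra_deg BETWEEN ? AND ? AND dec_deg BETWEEN ? AND ? "
        "ORDER BY object_id",
        (kind, ra_min, ra_max, dec_min, dec_max),
    )
    return cursor.fetchall()


print(objects_in_box(conn, "galaxy", 150.0, 152.0, -31.0, -29.0))
```

A graphical interface like the one Dubois mentions would presumably assemble a query of this general shape from the user's selections, so that researchers do not have to write it by hand.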

There’s an intermediate class of people who want to look at little patches of the sky. We can deliver that data over the network. But then there will be the adventuresome sorts, who want to go back and look at raw images. We don’t know how many of them there will be or how much data they’ll use, so we’re trying to think of different ways to serve them. Part of what we’re doing before LSST starts is understanding these access patterns, and that’s going to inform us about what’s going to work best and what isn’t going to work for the science platform.

Looking at the data facility overall, how big a deal is this?

At the end of 10 years, we’ll be sitting on more data than SLAC has ever seen to date. Processing that is a big deal. We’ve budgeted five months each year just to do the data releases. Just getting our hands on a few hundred petabytes of storage and collecting the processed data back from France and the U.K. is going to be an exercise on its own. These are not trivial activities.

Rubin Observatory is a joint initiative of the National Science Foundation and the Department of Energy’s Office of Science. Its primary mission is to carry out the Legacy Survey of Space and Time, providing an unprecedented data set for scientific research supported by both agencies. Rubin is operated jointly by NSF’s NOIRLab and SLAC. NOIRLab is managed for NSF by the Association of Universities for Research in Astronomy, and SLAC is operated for DOE by Stanford University.

For questions or comments, contact the SLAC Office of Communications at communications@slac.stanford.edu.

SLAC is a vibrant multiprogram laboratory that explores how the universe works at the biggest, smallest and fastest scales and invents powerful tools used by scientists around the globe. With research spanning particle physics, astrophysics and cosmology, materials, chemistry, bio- and energy sciences and scientific computing, we help solve real-world problems and advance the interests of the nation.

SLAC is operated by Stanford University for the U.S. Department of Energy’s Office of Science. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time.