SLAC Research Resumes at Upgraded Large Hadron Collider

ATLAS Experiment Continues Studies of Higgs Boson and Quest for New Physics

Research with the Large Hadron Collider (LHC) has officially resumed: After more than two years of upgrades and tests, the world’s largest particle accelerator at CERN, the European particle physics laboratory, began collecting data on June 3 at a new record energy that could hold the key to new scientific discoveries. To keep up with the boost in performance, researchers at the Department of Energy’s SLAC National Accelerator Laboratory have developed new technologies for ATLAS – one of two experiments involved in the 2012 discovery of the Higgs boson.

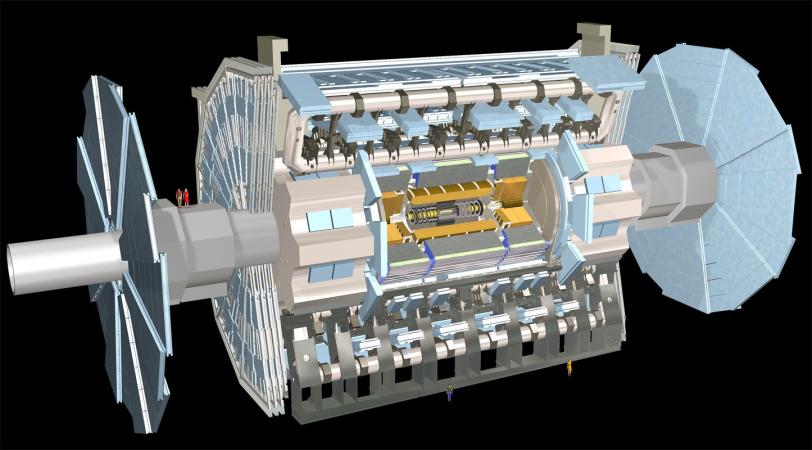

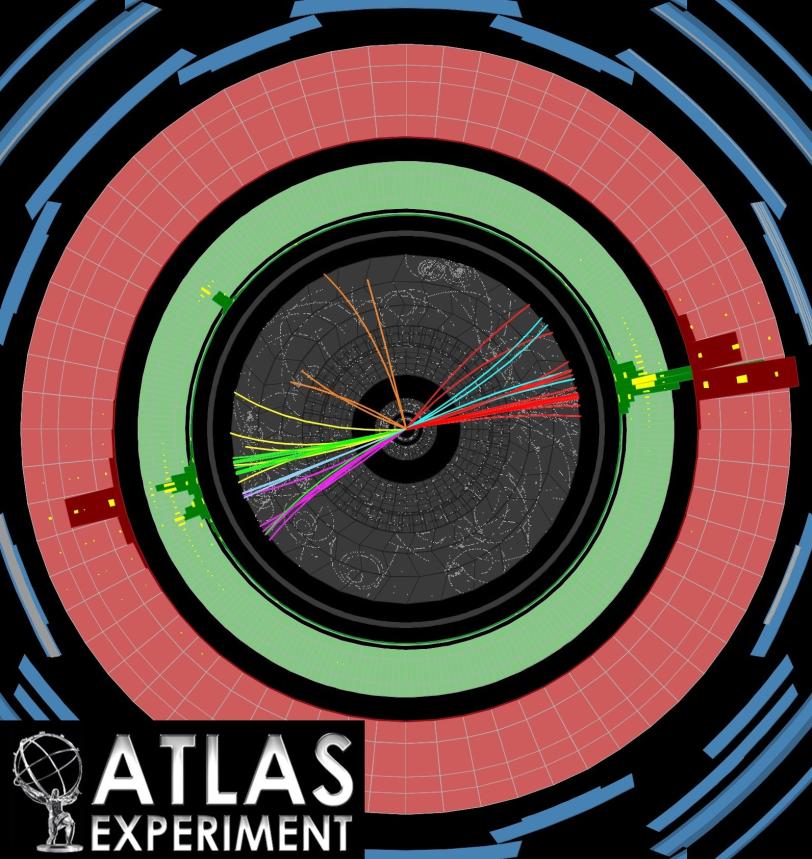

The ATLAS detector records the “debris” left behind when LHC’s highly energetic proton beams collide with each other – information scientists use to reconstruct the properties of nature’s fundamental particles and forces. Each proton beam of the upgraded accelerator will eventually carry the energy of a 400-tonne train traveling at about 90 miles per hour, releasing its energy in over 1 billion proton collisions per second and increasing LHC’s discovery potential.
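To put the train comparison in numbers, the short calculation below evaluates the classical kinetic energy ½mv² of the train described above. It is only a rough estimate; the function name and the conversion constant are illustrative choices and do not come from the experiment.

```python
MPH_TO_M_PER_S = 0.44704  # exact: 1 mile = 1609.344 m, 1 hour = 3600 s


def train_kinetic_energy_joules(mass_tonnes: float, speed_mph: float) -> float:
    """Classical kinetic energy, 0.5 * m * v**2, in joules."""
    mass_kg = mass_tonnes * 1000.0
    speed_m_per_s = speed_mph * MPH_TO_M_PER_S
    return 0.5 * mass_kg * speed_m_per_s ** 2


# Figures quoted in the text: a 400-tonne train at about 90 miles per hour.
energy_j = train_kinetic_energy_joules(mass_tonnes=400, speed_mph=90)
print(f"{energy_j / 1e6:.0f} MJ")
```

With these inputs the estimate comes out at a few hundred megajoules per beam.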

“As part of the international ATLAS collaboration, SLAC worked on quite a few things during the long shutdown to get ready for the second run of the LHC,” says particle physicist Dong Su, who is in charge of SLAC’s ATLAS group. “We improved the detector hardware itself, its data acquisition and readout systems, and the way we select and analyze interesting collision events. We’re also very much involved in various challenging aspects of computing.”

Doing Science Closest to the Action

The 7,000-tonne ATLAS detector, which is 151 feet long and 82 feet in diameter, has a variety of subsystems layered around the detector’s center and is designed to keep track of the myriad particles generated in LHC’s powerful proton collisions.

Its innermost component, known as the pixel detector, plays a crucial role. Because its millions of pixels are located only inches away from where the proton beams collide with each other, it measures the tracks of emerging particles very precisely and is sensitive to short-lived collision products that decay before they reach layers located further out.

For the second LHC run, researchers added a new detector layer: At an average distance of only 1.3 inches from the beamline, the Insertable B-Layer (IBL) sits closer to the proton beams than any other layer and further improves the pixel detector’s tracking precision.

“SLAC was instrumental in designing, assembling and testing the IBL,” says SLAC pixel detector lead Philippe Grenier. “Besides being closer to the beam, IBL’s pixels are smaller and made of lighter materials compared to the older components, resulting in a better detector resolution.”

One quarter of IBL’s sensors are novel 3-D sensors that were initially conceived at the Stanford Nanofabrication Facility and further developed at SLAC. They are more resistant to radiation damage than the other IBL sensors and help researchers cope with the increased radiation at the very heart of ATLAS due to the LHC upgrade.

Getting to the Bottom of Particle Physics

With the improved pixel detector, researchers may be able to answer open questions about the Higgs boson. They want to understand, for instance, how it decays into other particles.

“Our theories predict several routes for this to happen, and some of these decays have been discovered in the first run,” says SLAC’s ATLAS researcher Michael Kagan. “However, more than half of the time, a Higgs boson should produce a pair of bottom quarks, but we haven’t seen this process yet because of large background signals. More data from the second run and the development of new analysis techniques will help us shed light on this piece of Higgs physics.”

Bottom quarks are fundamental particles that cannot exist by themselves: Once produced in the decay of a Higgs boson at the proton collision point, they immediately team up with other partners to form relatively long-lived, composite particles. These travel a very short distance from the collision point before they decay into a set of more stable secondary particles. The IBL will help ATLAS researchers identify these secondary particle tracks that begin in locations other than the collision point and are potential telltale signs of the Higgs decay into bottom quarks.
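The idea of tracks that begin away from the collision point can be illustrated with a simplified geometric sketch. It assumes straight-line tracks in the plane transverse to the beam and a collision point at the origin. The function name, the example coordinates and the 0.1 mm threshold are hypothetical, and the identification techniques actually used in ATLAS are considerably more elaborate.

```python
import math


def transverse_impact_parameter(x0, y0, px, py):
    """Closest approach of a straight track to the origin (same unit as x0, y0).

    (x0, y0) is any point on the track; (px, py) is its direction in the
    plane transverse to the beam.
    """
    pt = math.hypot(px, py)
    if pt == 0:
        raise ValueError("track has no transverse direction")
    # |r x p| / |p| is the perpendicular distance from the origin to the line.
    return abs(x0 * py - y0 * px) / pt


# Hypothetical tracks: (x0 [mm], y0 [mm], px, py)
tracks = {
    "from collision point": (0.0, 0.0, 1.0, 0.5),
    "from secondary decay": (3.0, 0.0, 1.0, 0.1),  # starts 3 mm away
}
CUT_MM = 0.1  # illustrative threshold, not an ATLAS value

for name, (x0, y0, px, py) in tracks.items():
    d0 = transverse_impact_parameter(x0, y0, px, py)
    label = "displaced" if d0 > CUT_MM else "prompt-like"
    print(f"{name}: d0 = {d0:.3f} mm ({label})")
```

A track that truly originates at the collision point extrapolates back to it, so its impact parameter is zero in this idealized picture; a track from a secondary decay generally misses the collision point by a measurable distance, which is what a layer close to the beam helps resolve.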

Bottom quarks are also central to searches for new physics such as supersymmetry – a class of theories that aims at filling gaps in our understanding of fundamental particles and forces. It predicts the existence of a whole set of undiscovered particles and could potentially help scientists understand invisible dark matter.

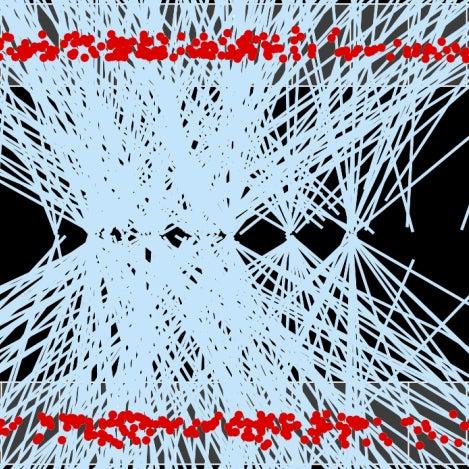

If it exists, supersymmetry should lead to an increase in LHC events that produce three or four bottom quarks. Yet, identifying these signatures is not trivial because instead of flying out in well-separated directions, collision products can bundle up in narrow particle streams, called jets.

“Jets are promising features for many searches for new phenomena,” says Ariel Schwartzman of SLAC’s ATLAS team. “But it’s very challenging to spot individual particles in them.” Over the past several years, he and others have been developing techniques to analyze the internal structure of jets. So far, these analyses have not revealed any unknown effects, but the search for new physics continues.

Dealing with a Flood of Complex Data

LHC’s upgrade not only increases its discovery potential, it also generates tremendous amounts of data that are increasingly challenging to handle.

Even before researchers get to do science with their data, they sift through millions of events per second and separate the wheat from the chaff. Although a so-called hardware trigger throws out a large amount of information that is obviously irrelevant, researchers still face 100,000 collisions per second that they need to take a closer look at.

“The solution is what we call the high-level trigger – a complex set of algorithms that runs on more than 20,000 computer cores and recognizes interesting physics,” says Rainer Bartoldus, the trigger and data acquisition lead of SLAC’s ATLAS group. “It selects only 1 percent of the data left by the hardware trigger and writes it to disk for later in-depth analysis. For the second run, we rebuilt this trigger, making it both smarter and more efficient.”
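The rates quoted above imply a simple back-of-the-envelope budget, sketched below. The calculation assumes that all 20,000 cores work on the high-level trigger with perfect load balancing, which is an idealization; the function and variable names are illustrative only.

```python
def high_level_trigger_budget(input_rate_hz, accept_percent, cores):
    """Return (events written per second, average seconds per event per core)."""
    recorded_rate_hz = input_rate_hz * accept_percent / 100
    # If every core is busy, each one handles input_rate_hz / cores events
    # per second, so it can spend cores / input_rate_hz seconds on each.
    seconds_per_event = cores / input_rate_hz
    return recorded_rate_hz, seconds_per_event


# Figures from the text: 100,000 collisions per second after the hardware
# trigger, 1 percent kept, more than 20,000 cores (20,000 used as a floor).
recorded, budget = high_level_trigger_budget(
    input_rate_hz=100_000, accept_percent=1, cores=20_000
)
print(f"events written per second: {recorded:.0f}")
print(f"average time per event per core: {budget:.1f} s")
```

Under these assumptions, about 1,000 events per second are kept for later analysis, and each core has on the order of a fifth of a second, on average, to decide whether an event is interesting.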

To keep up with the enormous rate at which the upgraded LHC generates data, SLAC’s ATLAS team and Technology and Innovation Directorate also developed a new data acquisition system for the muon Cathode Strip Chambers – detector elements in the outer regions of ATLAS that track heavy versions of electrons known as muons. Using the latest technologies from Silicon Valley, the new system is one-quarter the size of the previous readout system but can handle data output rates four times as high.

As one of 80 ATLAS “tier 2” computing centers, SLAC also plays a crucial role in storing and analyzing the 1 billion gigabytes of data that the experiment produces every year.

“About 60 percent of the entire computing power is currently spent on storage,” says Richard Mount, head of the computing group of SLAC’s elementary particle physics division, and ATLAS computing coordinator at CERN for the past two years. “We are working on reliable ways of reducing this amount by providing more remote access.”

This approach would free more computing resources for the demanding physics analysis of LHC events.

One particular analysis challenge is called pile-up: Since the collider’s proton beams are extremely bright and narrow, a beam’s single pass through ATLAS can cause multiple proton-proton collisions. In the first run, up to 20 proton-proton interaction points piled up along the detector center, and this number is expected to roughly double for the second run.

Since collision products coming from different interaction points can form particle jets, pile-up can obscure jets from single proton-proton collisions that potentially hint at new phenomena. “For many years now, SLAC has been playing a leading role in developing methods to subtract or suppress unwanted jets and to identify relevant events,” Schwartzman says.
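One widely used idea for subtracting pile-up, shown here only as a simplified illustration and not necessarily the method developed at SLAC, is to estimate a typical pile-up momentum density per unit of jet area from the event itself and to remove that share from every jet. The jet values and function names below are hypothetical.

```python
from statistics import median


def pileup_density(jets):
    """Median transverse momentum per unit area over all jets in the event.

    The median is used so that a few energetic jets do not bias the estimate.
    """
    return median(pt / area for pt, area in jets)


def subtract_pileup(jets):
    """Remove the estimated pile-up share from each jet, clipping at zero."""
    rho = pileup_density(jets)
    return [max(pt - rho * area, 0.0) for pt, area in jets]


# Hypothetical event: (transverse momentum [GeV], jet area).
# One energetic jet sits among several soft, pile-up-like jets.
jets = [(120.0, 0.5), (6.0, 0.5), (5.0, 0.5), (4.0, 0.4), (5.5, 0.5)]

corrected = subtract_pileup(jets)
for (pt, area), pt_corr in zip(jets, corrected):
    print(f"raw {pt:6.1f} GeV -> corrected {pt_corr:6.1f} GeV")
```

In this toy event the soft jets are reduced to nearly zero while the energetic jet keeps most of its momentum, which is the qualitative behavior such a correction aims for.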

Preparing for the Future

LHC’s second run may have just begun, but researchers are already preparing for the future.

In current silicon detectors like ATLAS’s pixel detector, sensors and electric circuits are separate parts, demanding a tedious and costly process of bonding them together. Future detector upgrades could benefit from CMOS technology, which is increasingly being used in digital cameras and other applications to simplify fabrication.

“Together with Stanford, we are working on two new CMOS prototypes that incorporate sensors and electric circuits in one piece,” says Christopher Kenney, who leads the sensor group of SLAC’s Technology and Innovation Directorate. “With the new technology, we may be able to build pixel detectors with better resolution that cost the same as the current standard.”

Su adds, “While the new CMOS sensor development pushes for better detector resolution and resistance to radiation, SLAC’s ATLAS upgrade efforts also take on a variety of other challenges, from high-speed data connections to high-bandwidth data acquisition to advanced trigger and timing technologies. Advances in these fields will prepare us for the exciting science exploration ahead of us at the LHC energy frontier.”

For questions or comments, contact the SLAC Office of Communications at communications@slac.stanford.edu.

SLAC is a multi-program laboratory exploring frontier questions in photon science, astrophysics, particle physics and accelerator research. Located in Menlo Park, Calif., SLAC is operated by Stanford University for the U.S. Department of Energy's Office of Science.

SLAC National Accelerator Laboratory is supported by the Office of Science of the U.S. Department of Energy. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.